Selecting the right text analytics/Natural Language Processing (NLP) tool can be overwhelming, especially when you’re starting out, because of all the choices available. It is hard to predict whether the choice you make will bring the best Return on Investment (ROI). Selecting the right NLP tool is crucial to achieving optimal performance and realising benefits.

Every organisation has its own requirements and implements NLP with those requirements in mind. Different NLP tools incorporate different AI techniques, so they are better suited to different tasks; an NLP tool selected without taking this into account might not perform as desired.

Factors to consider while selecting an NLP tool

Type of customer interaction

The type of customer interaction heavily influences the selection of the right NLP tool: how frequent it is, how long it lasts, which channel it happens in and what outcome it serves are all factors. I typically see clients looking to leverage NLP text analysis for these areas of their Customer Experience program:

- Automation — Chatbots (Voice or Text based: eg, Call Centre, Smart Speaker, Website, Social Media: Facebook Messenger, Twitter etc)

- Business data analysis — deep dive investigations, recommendations on business changes and automation

- Question and Answer / FAQ

- Routing — Voice calls to the correct team

- Routing — Support tickets to the correct team

- Survey / Feedback analytics

- Sentiment Analysis and Customer Satisfaction

- Search or Knowledge retrieval from existing content

- Agent support and streamlining of performance

- Summarisation of customer interactions

Volume of customer conversations

Some organisations handle only hundreds of customer conversations per month, whereas others might handle tens of thousands. The volume and distribution of training utterances affect the type of NLP technique you should employ.

With low volumes, you’ll either be waiting forever to capture enough ‘real’ data or you’ll use artificial (‘fake’) data to bootstrap model training. In such cases it might be worth looking at rule-based NLP engines to meet your needs.

We often hear clients say they have hundreds of thousands of historical records. In reality, most of those records cover out-of-date products and issues that just create noise and performance problems for their NLP models.

Managing a large number of chatbot questions comes with its own challenge: keeping overlap and miscategorisation between questions to a minimum so the customer experience doesn’t suffer.

Complexity of customer enquiries

Depending on the size and sector of an organisation, the complexity of customer interactions can vary. It can be simple, with fewer than five areas of interest or categories.

Let’s say you have just 2 categories: ‘sales’ and ‘service’. The NLP task of categorising is then pretty straightforward; you could probably even do this with just keywords.
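To make the keyword idea concrete, here is a minimal sketch in Python. The keyword lists and the `categorise` function are my own illustrative assumptions, not taken from any particular NLP product; a real list would come from your own customer enquiries.

```python
import re

# Hypothetical keyword lists, for illustration only.
KEYWORDS = {
    "sales": {"buy", "price", "quote", "purchase", "order"},
    "service": {"broken", "repair", "help", "fault", "refund"},
}


def categorise(message: str) -> str:
    """Pick the category with the most keyword hits; 'unknown' on no hits or a tie."""
    words = re.findall(r"[a-z]+", message.lower())
    scores = {
        category: sum(word in keywords for word in words)
        for category, keywords in KEYWORDS.items()
    }
    top = max(scores.values())
    winners = [category for category, score in scores.items() if score == top]
    if top == 0 or len(winners) > 1:
        return "unknown"
    return winners[0]


print(categorise("What is the price to buy two units?"))  # sales
print(categorise("My router is broken, please help"))     # service
print(categorise("I want a refund on my order"))          # unknown (one hit each)
```

The last example hints at why this stops working as categories multiply: a single message can easily match keywords from more than one bucket.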

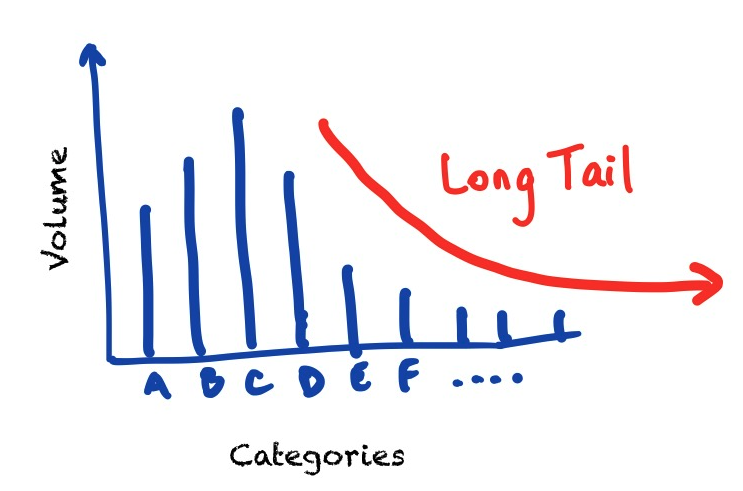

Where the number of categories (aka “buckets”) to select from is large, the task of selecting the correct category becomes much harder for the NLP platform, with more ‘decoys’ (‘false accepts’ of one category for another).

A machine learning approach might seem best for a large number of categories, but its performance then relies on accurately labelled data (no overlap with other categories, and no confusion from multiple enquiries within a single request) and on enough samples per category to avoid dataset imbalance.

If your categories aren’t evenly balanced and have a “long tail” of rarely used categories, you’re better off selecting a rule-based NLP platform.
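A quick way to see whether you have a long tail is to count how your labelled enquiries are spread across categories. The sketch below is only an illustration: the `category_report` helper, the sample labels and the 5% threshold are all assumptions of mine, and the right threshold depends on your data.

```python
from collections import Counter


def category_report(labels, min_share=0.05):
    """Return per-category (name, count, share) rows, most common first,
    plus the categories whose share falls below min_share (the 'long tail')."""
    counts = Counter(labels)
    total = sum(counts.values())
    rows = [(name, n, n / total) for name, n in counts.most_common()]
    long_tail = [name for name, _, share in rows if share < min_share]
    return rows, long_tail


# Made-up labels standing in for a labelled set of customer enquiries.
labels = ["billing"] * 60 + ["delivery"] * 30 + ["returns"] * 8 + ["warranty"] * 2

rows, long_tail = category_report(labels)
for name, n, share in rows:
    print(f"{name:10s} {n:4d} {share:6.1%}")
print("Long-tail categories:", long_tail)  # ['warranty']
```

If a large share of your categories ends up in the long-tail list, a machine learning model is unlikely to see enough examples of them to learn from.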

I’ve seen cases where subject matter experts within an organisation can’t agree on the category to bucket a customer enquiry into.

Richness of customer interactions

The richness of the customer interaction being analysed refers to the number of data elements (aka “slots”) contained within a customer’s message. This ranges from no additional information, as in Q&A-type enquiries like “what are your opening hours?”, to small amounts of additional information such as a product or service: “what’s the price of <product_x>?”. Complex cases include multiple pieces of data which a user might provide all at once, like a flight booking needing date, time, origin, destination, number of tickets, type of tickets, etc.

Each use case you explore for NLP will have differing levels of richness. Both your current needs and potential future needs should be considered when selecting the right NLP tool — or be ready to jump ship and move platforms.
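To picture what slots look like in practice, here is a small Python sketch based on the flight booking example. The `FlightBooking` structure, the `parse_booking` function and its regular expressions are hypothetical and deliberately simplistic; real NLP tools typically use trained entity extractors rather than hand-written patterns.

```python
import re
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class FlightBooking:
    """One field per slot; None means the customer has not supplied it yet."""
    origin: Optional[str] = None
    destination: Optional[str] = None
    date: Optional[str] = None
    time: Optional[str] = None
    tickets: Optional[int] = None
    ticket_type: Optional[str] = None


def parse_booking(message: str) -> FlightBooking:
    """Fill the slots these toy patterns can find (route and ticket count only)."""
    booking = FlightBooking()
    route = re.search(r"from (\w+) to (\w+)", message, re.IGNORECASE)
    if route:
        booking.origin, booking.destination = route.group(1), route.group(2)
    count = re.search(r"(\d+) tickets?", message, re.IGNORECASE)
    if count:
        booking.tickets = int(count.group(1))
    return booking


def missing_slots(booking: FlightBooking) -> List[str]:
    """List the slots that still need to be asked for."""
    return [f.name for f in fields(booking) if getattr(booking, f.name) is None]


booking = parse_booking("I need 2 tickets from Sydney to Perth")
print(booking.origin, booking.destination, booking.tickets)  # Sydney Perth 2
print(missing_slots(booking))  # ['date', 'time', 'ticket_type']
```

The richer the interaction, the more slots the tool has to fill from a single message, and the more follow-up questions it has to manage for the ones it missed.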

In-house or outsource

(Thanks to Kate Brodie for flagging this additional consideration.)

The decision to outsource or build internally should take into account more than just your current and future capabilities in the field of AI.

Explore whether the planned application of NLP is for your core business or supporting functions and whether it would be beneficial to outsource.

You might also consider outsourcing expertise as you build maturity and experience within your organisation.

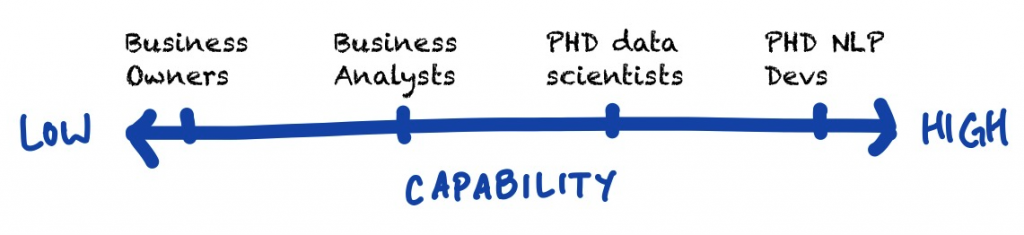

Your organisation’s capability

Your organisation’s capability is another aspect to consider when selecting the best text analytics/NLP tool. Depending on that capability, in-house developers may be able to build the NLP tool themselves. Organisations with skilled data scientists and NLP resources can undertake the analysis themselves. Similarly, organisations with subject matter experts (SMEs) and business analysts can draw on those people to find the right text analytics tool. Finally, organisations can choose to outsource the analysis to independent experts such as Pure Speech Technology and get a recommendation on the right text analytics/NLP tool for them.

Consequences of selecting an ill-fitting text analytics/NLP tool

So, what actually happens when you select an NLP tool that isn’t suitable for you? The organisation may not reach the desired performance or the expected Return on Investment (ROI). The time, effort and resources spent on the NLP tool are wasted, and the organisation is unable to make business improvements. An unfit text analytics/NLP tool creates a negative experience for customers, which can send them to competitors. Furthermore, once the NLP tool has been deemed unfit, the organisation has to spend extra time and resources exploring new solutions and implementing the migration.

Challenges of selecting the right text analytics/NLP tool

Selecting the best text analytics/NLP tool can be an overwhelming task, especially for organisations that do not have the right skilled resources. It can also be expensive and involve a steep learning curve. With so many options to choose from, it becomes confusing which is best for your organisation. Even with a trial or implementation, an organisation might not be sure whether the solution is offering the best performance (“is 80% accuracy as good as it gets with this tool?”).

4 paths to selecting the right NLP tool for your customer experience needs

I recommend a few avenues to explore when selecting the best text analytics/NLP tool for your situation:

- trial and benchmark NLP tools yourself. You’ll need a reasonable dataset to benchmark with, and the skilled resources to run tests and analyse the results in limited time. You can even purchase benchmark reports such as the Text Analytics API guide 2020 on 34 different platforms.

- speak to NLP/NLU vendors and obtain proof they know how to solve your specific text analysis needs to deliver on the ROI you are targeting.

- connect with the community and reach out to peers implementing NLP tools for similar situations.

- talk to independent industry experts like Pure Speech Technology who won’t have a biased view.
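If you take the first path and benchmark tools yourself, the core of the exercise is running every candidate over the same labelled dataset and comparing scores. The Python sketch below shows one possible shape for that. The dataset and both “tools” are made-up stand-ins of mine; in practice each function would wrap a call to a vendor’s API or your own model.

```python
from typing import Callable, Dict, List, Tuple

Dataset = List[Tuple[str, str]]  # (customer message, correct category)


def accuracy(predict: Callable[[str], str], dataset: Dataset) -> float:
    """Share of messages for which the tool returns the correct category."""
    correct = sum(predict(text) == label for text, label in dataset)
    return correct / len(dataset)


def benchmark(tools: Dict[str, Callable[[str], str]], dataset: Dataset):
    """Score every tool on the same dataset, best first."""
    results = [(name, accuracy(predict, dataset)) for name, predict in tools.items()]
    return sorted(results, key=lambda result: result[1], reverse=True)


# Hypothetical labelled sample; use your own recent, relevant enquiries.
dataset = [
    ("How much is the premium plan?", "sales"),
    ("My device is broken", "service"),
    ("I need help with my account", "service"),
    ("Can I buy in bulk?", "sales"),
    ("The app keeps crashing", "service"),
]

# Stand-ins for real tools: a naive baseline and a simple keyword rule.
tools = {
    "always_sales": lambda text: "sales",
    "keyword_tool": lambda text: (
        "service" if any(w in text.lower() for w in ("broken", "help")) else "sales"
    ),
}

for name, score in benchmark(tools, dataset):
    print(f"{name:14s} {score:.0%}")
# keyword_tool   80%
# always_sales   40%
```

Including a naive baseline like `always_sales` gives a headline figure such as 80% some context: it shows how far a tool improves on doing almost nothing, even if it can’t tell you whether a better tool exists.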

If you’re still unsure which text analytics/NLP tool to use for your organisation, we’ve made it much simpler and created a short quiz to help you find the best text analytics/NLP tool to achieve optimal organisational performance. Our team of experts will lean on their decades of experience in the customer experience automation domain to provide you with a custom recommendation on which product(s) best suit your needs.